If you keep running into Proxy Error 429 on Janitor AI or while using proxies, you’re definitely not the only one. Many users feel stuck because the error shows up without warning. Everything seems to work normally at first, then your requests suddenly stop going through. Conversations freeze, retries fail, and the entire experience feels unstable and unpredictable.

Proxy Error 429 appears for clear and specific reasons. It is the server’s way of signaling that your request pattern looks too fast or too frequent. The issue becomes even more common when traffic passes through shared proxy IPs or when the upstream AI provider is under heavy load. To help you regain a smooth and reliable experience, this guide breaks down the causes behind the 429 response, why Janitor AI users encounter it so often, and the working fixes you can apply right away.

Proxy Error 429 appears when a server concludes that incoming requests are arriving too quickly. The message is simple, but the underlying causes are often diverse. Understanding these triggers helps maintain stable connections, especially when using proxies, automation tools, or AI platforms.

Servers enforce rate limits to protect resources. They track not only the number of requests but also the pace at which they arrive. A short burst of rapid calls can trigger a 429 response even when the total volume is low.

This often occurs when multi-threaded crawlers start at the same time or when scripts loop without pauses. These bursts can use up an IP or API key’s allowance in seconds, and they can trigger 429 errors even without a proxy involved.
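To see why a burst can trip a limit even when total volume is low, it helps to model the limiter. The sketch below uses a token bucket, one common way rate limiters are built. It is illustrative only: the class name `TokenBucket`, the capacity of 5, and the refill rate of one token per second are assumptions, not values any particular server is known to use.

```python
class TokenBucket:
    """Toy rate limiter: holds up to `capacity` tokens, refilled at `rate` per second."""

    def __init__(self, capacity, rate):
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.last = 0.0  # timestamp of the previous request, in seconds

    def allow(self, now):
        # Refill according to elapsed time, never exceeding capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return 200
        return 429


def simulate(timestamps, capacity=5, rate=1.0):
    """Return the status code each request would receive (assumed limits)."""
    bucket = TokenBucket(capacity, rate)
    return [bucket.allow(t) for t in timestamps]


# Eight requests fired 50 ms apart: the burst drains the bucket.
burst = simulate([i * 0.05 for i in range(8)])
# The same eight requests spaced one second apart all pass.
paced = simulate([float(i) for i in range(8)])
print(burst)
print(paced)
```

Both runs send the same eight requests. Only the spacing differs, which is why pacing matters more than raw volume under this kind of limiter.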

Proxies add additional risks. Shared IPs merge traffic from many users, and if the combined activity exceeds limits, every user behind that address is restricted.

Dirty or recycled IP pools create similar problems. These IPs may carry a history of aggressive use, causing servers to apply tighter thresholds immediately. Rotation strategy also matters. Limited rotation concentrates too much activity on one IP, while overly aggressive rotation creates patterns that do not resemble normal user behavior.

Automated tools often produce request rhythms that differ from human behavior. Scripts that run without pauses or repeat the same sequence create predictable patterns that detection systems can identify quickly.

Even moderate automation can trigger 429 errors when timing becomes too consistent. The issue is often the regularity of the rhythm rather than the total number of calls.

Modern platforms evaluate the quality of each request. Incomplete headers, unstable cookies, or inconsistent browser fingerprints lower the trust assigned to a session.

When each request appears to come from a new user or the fingerprint shifts frequently, systems become more cautious. They may not block access entirely, but will enforce stricter limits, causing 429 errors to appear earlier than expected.
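One way to keep identity signals steady in a script is to reuse a single client object, so every call carries the same headers and the same cookie jar. The sketch below uses only the standard library. The function name and header values are illustrative assumptions, and with the `requests` library a single `requests.Session` plays the same role.

```python
import http.cookiejar
import urllib.request


def build_opener_with_identity():
    """Return an opener that reuses one cookie jar and one header set for every call."""
    jar = http.cookiejar.CookieJar()
    opener = urllib.request.build_opener(urllib.request.HTTPCookieProcessor(jar))
    # Illustrative header values (assumptions); use ones that match your real client.
    opener.addheaders = [
        ("User-Agent", "Mozilla/5.0 (X11; Linux x86_64) ExampleClient/1.0"),
        ("Accept", "application/json"),
        ("Accept-Language", "en-US,en;q=0.9"),
    ]
    return opener, jar


opener, jar = build_opener_with_identity()
# Each opener.open(url) call would now send the same headers,
# and cookies set by the server would persist in `jar` between calls.
```

Creating a fresh client for every request discards cookies each time, which is the "new user on every call" pattern described above.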

Many services apply limits at the account level instead of the IP level. API keys often include strict per-minute or per-day quotas. Once those limits are reached, the server returns 429 responses until the quota resets.

Rate-limit headers typically indicate remaining allowance and reset timing. When these signals are overlooked, users may assume the proxy is responsible even though the restriction comes from account-level usage.
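A small helper can surface these signals before a quota runs out. The sketch below assumes the widely used `X-RateLimit-*` header names. They are a convention rather than a standard, so check your provider's documentation for the names and for how the reset value is expressed. The function names and the `floor` parameter are hypothetical.

```python
def read_rate_limit(headers):
    """Extract rate-limit hints from a response-header mapping, if present."""
    # Header names vary by provider; these are common conventions (assumption).
    lowered = {k.lower(): v for k, v in headers.items()}
    info = {}
    for field in ("limit", "remaining", "reset"):
        value = lowered.get(f"x-ratelimit-{field}")
        if value is not None:
            info[field] = value
    return info


def should_pause(headers, floor=1):
    """Return True when the remaining allowance is at or below `floor`."""
    remaining = read_rate_limit(headers).get("remaining")
    try:
        return remaining is not None and int(remaining) <= floor
    except ValueError:
        return False  # unparseable value: make no assumption


sample = {
    "X-RateLimit-Limit": "60",
    "X-RateLimit-Remaining": "1",
    "X-RateLimit-Reset": "30",
}
print(read_rate_limit(sample))
print(should_pause(sample))
```

If these headers show the allowance shrinking on every call regardless of which proxy is in use, the limit is most likely tied to the account or API key rather than the IP.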

Janitor AI users encounter Proxy Error 429 more often not because of a single issue, but because of how the platform routes each request. Messages move through several services, and every layer has its own limits, queue rules, and capacity thresholds. When any part of this chain slows down, the delay spreads to the entire path. Understanding this structure explains why 429 errors appear frequently, even during normal usage.

A message sent through Janitor AI passes through multiple systems: Janitor AI, then OpenRouter, then the upstream model provider, such as OpenAI. Each layer operates with its own queue and rate-limit policies. When the upstream provider experiences a heavy load, the slowdown propagates back through OpenRouter and eventually reaches Janitor AI.

In these cases, Janitor AI returns the upstream response directly, which often resembles a standard 429. The restriction may come from several steps away, rather than your own requests. This multi-layer design explains why 429 errors can appear even when your activity is moderate and your proxy setup is stable.

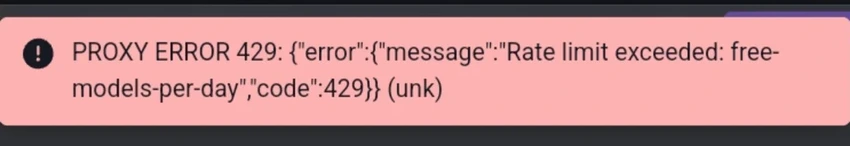

AI providers consistently prioritize paid traffic over free access, and Janitor AI follows the same pattern. Free-tier requests are handled on a best-effort basis and placed behind higher-priority queues. During busy periods, the spacing between low-priority requests widens to keep performance stable.

As demand increases, free sessions hit rate limits sooner, even with normal usage. This reflects Janitor AI’s traffic management rather than any misuse. It also explains why changing IPs or proxies rarely reduces 429 frequency for free-tier users, since the limitation is tied to account priority rather than network identity.

Usage patterns on Janitor AI can also amplify 429 responses. Long prompts require more computation, keeping the upstream model busy for longer and reducing available capacity.

When responses take longer to appear, users often click retry while the original request is still processing. These overlapping retries create small bursts of traffic that increase rate-limit pressure. Combined with high model load, even a few rapid attempts can exceed what the system can handle comfortably and lead to repeated 429 errors.
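For scripted clients, the equivalent of not clicking retry too early is to run attempts strictly one at a time and wait longer after each failure. The sketch below shows exponential backoff with random jitter. The helper names, the `send` callable, and the base and cap values are hypothetical choices for illustration, not settings taken from Janitor AI or any provider.

```python
import random
import time


def backoff_delays(max_retries=4, base=1.0, cap=30.0):
    """Yield one wait time per retry: exponential growth with full jitter."""
    for attempt in range(max_retries):
        ceiling = min(cap, base * (2 ** attempt))
        yield random.uniform(0, ceiling)


def call_with_backoff(send, max_retries=4, sleep=None):
    """Call `send()` until it returns a non-429 status or retries run out.

    `send` is a hypothetical zero-argument callable returning an HTTP status code.
    Each attempt finishes before the next begins, so retries never overlap.
    """
    if sleep is None:
        sleep = time.sleep
    status = send()
    for delay in backoff_delays(max_retries):
        if status != 429:
            break
        sleep(delay)
        status = send()
    return status
```

Because every wait is drawn at random below a growing ceiling, several clients that fail at the same moment are unlikely to retry at the same moment.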

Proxy Error 429 can appear at different points in the request chain, but most cases can be improved by adjusting how requests are sent and how identity signals are managed. The methods below focus on creating a traffic pattern that servers accept more reliably. Each section includes practical guidance and Python examples you can adapt to your own scripts or workflows.

Adding short delays between requests is one of the most reliable ways to reduce repeated 429 responses. Servers respond better to slower and slightly irregular traffic because it resembles normal user behavior rather than automated bursts.

A practical approach is to add a small random pause between calls, usually 300 to 800 milliseconds. The randomness helps break fixed rhythms that detection systems flag easily.

When a 429 response includes a Retry-After header, follow it closely. This value tells you how long the server needs before accepting another request. Ignoring it often leads to repeated blocking and longer recovery times.
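The HTTP specification allows `Retry-After` to carry either a number of seconds or an HTTP date, so a robust client should handle both forms. The sketch below is one way to do that with the standard library. The function name, the 10-second fallback, and the `now` parameter (included so the date branch can be tested) are assumptions.

```python
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def retry_after_seconds(value, default=10.0, now=None):
    """Convert a Retry-After header value to a non-negative wait in seconds."""
    if value is None:
        return default
    value = value.strip()
    if value.isdigit():
        return float(value)  # delay-seconds form, e.g. "120"
    try:
        when = parsedate_to_datetime(value)  # HTTP-date form
    except (TypeError, ValueError):
        return default  # unrecognized format: fall back to the default wait
    if when.tzinfo is None:
        when = when.replace(tzinfo=timezone.utc)
    if now is None:
        now = datetime.now(timezone.utc)
    return max(0.0, (when - now).total_seconds())


print(retry_after_seconds("120"))
print(retry_after_seconds("not-a-date"))
```

The result can be passed straight to `time.sleep()` in place of a fixed wait.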

Example: use random delay and handle Retry-After

```python
import requests
import time
import random


def safe_get(url, headers=None, max_retries=3):
    retries = 0
    while retries <= max_retries:
        response = requests.get(url, headers=headers, timeout=15)

        if response.status_code == 429:
            # Honor Retry-After when it is a plain number of seconds; otherwise wait 10.
            retry_after = response.headers.get("Retry-After")
            if retry_after:
                try:
                    wait_seconds = int(retry_after)
                except ValueError:
                    wait_seconds = 10
            else:
                wait_seconds = 10

            print(f"Hit 429, waiting {wait_seconds} seconds before retrying")
            time.sleep(wait_seconds)
            retries += 1
            continue

        if response.status_code != 200:
            response.raise_for_status()
        return response

    raise RuntimeError("Exceeded maximum retries after repeated 429 responses")


urls = ["https://example.com/api/1", "https://example.com/api/2"]

for url in urls:
    resp = safe_get(url)
    print(f"Status {resp.status_code} for {url}")
    # Random pause between calls to avoid a fixed rhythm.
    time.sleep(random.uniform(0.3, 0.8))
```

Even with a moderate number of requests, sending many calls at the same moment creates short spikes that servers interpret as unsafe. Reducing concurrency spreads traffic across time and lowers the chance of triggering limits.

Scripts that use threads or asynchronous patterns often benefit from a smaller worker pool. Combined with light pauses between calls, this produces a more stable flow that avoids dense clusters of activity.

Example: reduce thread count and avoid burst patterns

```python
import requests
import time
import random
from concurrent.futures import ThreadPoolExecutor, as_completed

urls = [f"https://example.com/api/{i}" for i in range(1, 21)]


def fetch(url, retries_left=3):
    response = requests.get(url, timeout=10)

    if response.status_code == 429 and retries_left > 0:
        print(f"Got 429 on {url}, backing off for 5 seconds")
        time.sleep(5)
        # Bounded retry so a persistent 429 cannot recurse forever.
        return fetch(url, retries_left - 1)

    # Raises for any remaining error status, including a final 429.
    response.raise_for_status()
    time.sleep(random.uniform(0.2, 0.6))
    return url, response.status_code


results = []

# A small worker pool keeps the number of simultaneous requests low.
with ThreadPoolExecutor(max_workers=3) as executor:
    futures = {executor.submit(fetch, url): url for url in urls}

    for future in as_completed(futures):
        url = futures[future]
        try:
            u, status = future.result()
            results.append((u, status))
            print(f"{u} completed with status {status}")
        except Exception as e:
            print(f"Request for {url} failed: {e}")
```

This approach limits instantaneous pressure without requiring major changes to the overall code structure.

Proxy configuration influences how often 429 errors appear. Slow rotation concentrates many requests on one IP. Rotation that is too frequent creates a stream of short-lived identities. A balanced approach is more reliable. Occasional rotation avoids repeated limits on a single IP, while sticky sessions preserve continuity for login flows or long conversations.
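A balanced rotation can be as simple as keeping each proxy for a fixed number of requests before moving to the next. The sketch below shows only the selection logic. The function name, the pool entries, and the window of three requests are hypothetical and should be tuned to your provider and target.

```python
import itertools


def sticky_rotation(proxies, requests_per_proxy):
    """Yield a proxy for each request, switching only after a fixed number of uses."""
    for proxy in itertools.cycle(proxies):
        for _ in range(requests_per_proxy):
            yield proxy


# Hypothetical pool entries; replace with real proxy URLs.
pool = ["http://proxy-a.example:8000", "http://proxy-b.example:8000"]
chooser = sticky_rotation(pool, requests_per_proxy=3)
assignments = [next(chooser) for _ in range(8)]
print(assignments)
```

Each call to `next(chooser)` returns the proxy to use for the next request, so a login flow or a long conversation can stay on one address for the whole window.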

Residential IPs often reduce 429 frequency because they align more closely with normal household traffic. Pools that refresh regularly further reduce the chance of encountering recycled or pre-flagged addresses.

Example: basic proxy rotation strategy

```python
import requests
import time
import random

# Placeholder entries: substitute your provider's credentials, host, and port.
proxy_pool = [
    "http://user:pass@HOST_1:PORT",
    "http://user:pass@HOST_2:PORT",
    "http://user:pass@HOST_3:PORT",
]


def get_session_with_proxy(proxy_url):
    session = requests.Session()
    session.proxies = {
        "http": proxy_url,
        "https": proxy_url,
    }
    return session


urls = [f"https://example.com/api/{i}" for i in range(1, 11)]

for url in urls:
    proxy = random.choice(proxy_pool)
    session = get_session_with_proxy(proxy)

    try:
        response = session.get(url, timeout=15)

        if response.status_code == 429:
            print(f"429 on {url} with proxy {proxy}, backing off")
            time.sleep(5)
            # Skip this URL and move on to the next one with a freshly chosen proxy.
            continue

        response.raise_for_status()
        print(f"Success for {url} through {proxy}")
    except Exception as e:
        print(f"Request failed for {url} through {proxy}: {e}")

    time.sleep(random.uniform(0.5, 1.5))
```

Stable results also depend on access to a clean and frequently refreshed residential pool. With IPcook, you receive the gateway address, port number, and account credentials, and the system assigns your traffic to a clean rotating residential pool automatically. This provides a steadier identity pattern and helps reduce the rate-limit responses that often occur during scraping, automation, or Janitor AI sessions.

Proxy Error 429 appears when a server needs your traffic to slow down or follow a steadier rhythm. The triggers range from short bursts and automation patterns to upstream congestion inside Janitor AI’s routing path. Even normal usage can hit these limits when requests cluster too closely or when identity signals shift in ways the system interprets as unusual.

Most solutions center on shaping a smoother pattern. Small pauses, reduced concurrency, and consistent session behavior help servers process requests without tightening their limits. Clean residential traffic strengthens this effect by providing a connection profile that looks natural and remains stable over time.

IPcook supports this approach by providing easy access to a refreshed residential pool that keeps traffic stable and reliable for scraping, automation, and Janitor AI workflows. If you need a cleaner and more consistent route, you can try setting up a residential connection through IPcook to see the improvement.