Top 5 Best Web Data Scraping Services: Compare & Choose

Businesses across industries now turn to web scraping service providers for real-time insight into markets, competitors, and customer behavior. Whether it's tracking product prices, monitoring brand mentions, or aggregating content at scale, the right service can turn the chaotic web into a structured, valuable data stream. However, choosing a website scraping company is not just about automation; it is also about reliability, scalability, compliance, and expert support.

From high-performance proxy networks to fully managed scraping solutions, this post compares the best web data scraping services and introduces our top proxy provider pick, IPcook, to help you choose the solution that best fits your business goals. Whether you're an e-commerce seller needing dynamic price tracking, a data analyst sourcing structured datasets, or a startup looking for a scalable and compliant data pipeline, read on to find a match.

What to Look for in a Website Scraping Company

Choosing the best web data scraping services requires identifying a reliable website scraping company that aligns with your technical needs and compliance requirements. Key criteria include:

Data Quality: Accurate, deduplicated, and structured output in formats like JSON or CSV.

Compliance & Ethics: Adherence to data privacy laws (e.g., GDPR, CCPA) and robots.txt policies.

Anti-Bot Bypass Capabilities: Use of residential IPs, headless browsers, and CAPTCHA solvers to access protected content.

Scalability: Ability to handle large-scale tasks and multi-source scraping across regions.

Technical Compatibility: Support for APIs, webhook delivery, cloud export, or custom integrations.

Service & SLA: Clear uptime guarantees, fast response times, and ongoing technical support.
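The robots.txt part of the compliance criterion can be checked programmatically before any request is sent. The sketch below uses Python's standard-library `urllib.robotparser`. The robots.txt content, the `example-bot` user agent, and the `shop.example.com` URLs are made-up placeholders; a real crawler would fetch the file from the target site rather than parse an inline string.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the example runs offline.
# In practice, call parser.set_url("https://<site>/robots.txt") and parser.read().
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a RobotFileParser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, user_agent: str, url: str) -> bool:
    """Return True if the rules permit user_agent to fetch url."""
    return parser.can_fetch(user_agent, url)


parser = build_parser(SAMPLE_ROBOTS_TXT)
print(is_allowed(parser, "example-bot", "https://shop.example.com/products/1"))    # True
print(is_allowed(parser, "example-bot", "https://shop.example.com/checkout/cart"))  # False
print(parser.crawl_delay("example-bot"))                                            # 5
```

A check like this covers only crawl permissions; privacy laws such as GDPR and CCPA concern what data is collected and how it is handled, which a provider has to address separately.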

By assessing providers through these lenses, you can confidently invest in a solution that delivers both performance and peace of mind, setting the stage for our top recommendations below.

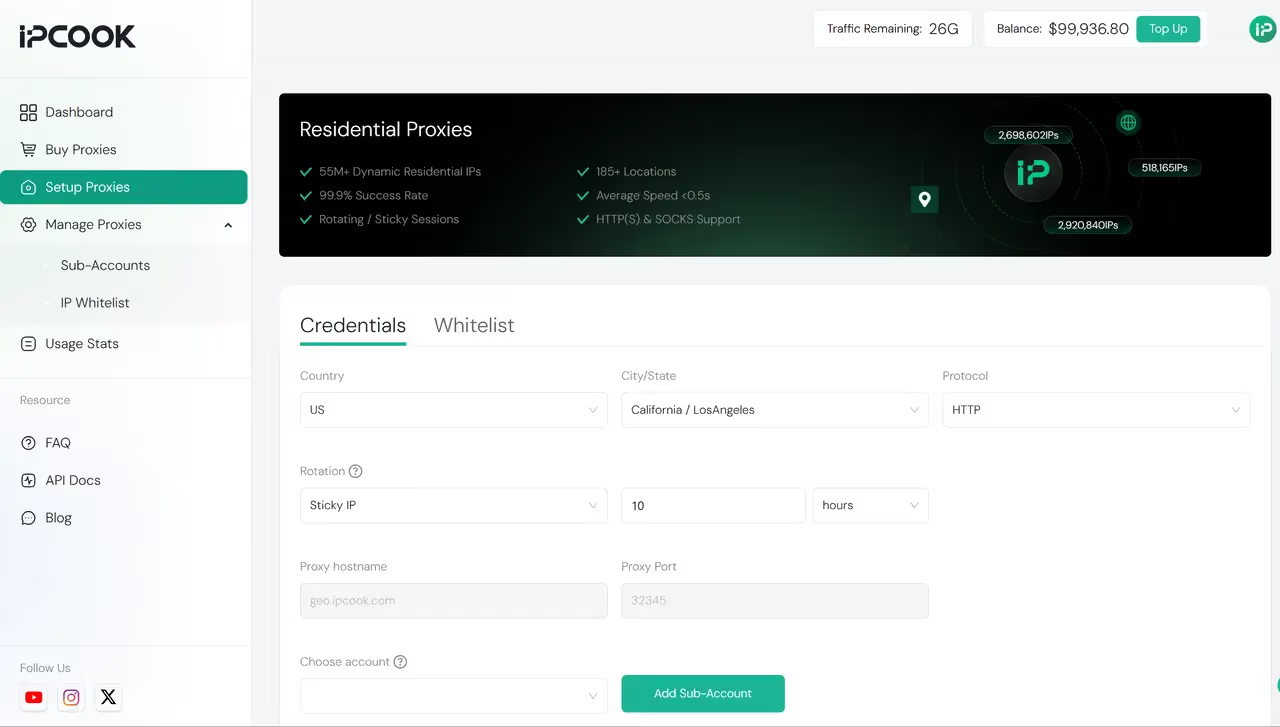

👍 IPcook – Best Proxy Provider for Web Scraping Service

IPcook is our top proxy provider pick for reliable web scraping. It offers a robust, dynamic residential IP network with real-time rotation and traffic-based pricing, supporting stable access to target websites, even those with aggressive anti-scraping systems. From extracting product listings on Amazon and other global e-commerce platforms to monitoring regional ad campaigns or scaling account automation across social networks, IPcook offers the stability and performance that complex scraping projects require.

In addition to a massive pool of clean, real, dynamic residential IPs that reduces detection and raises success rates, IPcook supports integration with popular scraping tools and frameworks. It also offers a customizable API tailored to specific data workflows, allowing you to automate and scale your operations with precision. Whether you're running high-concurrency crawlers or mission-critical pipelines, IPcook is built to deliver high throughput, low block rates, and good cost-efficiency over the long term.
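To make the integration point concrete, here is a minimal sketch of routing Python requests through an authenticated HTTP proxy using only the standard library. The gateway host, port, and credentials are placeholders, not IPcook's actual endpoint or credential format, which this post does not specify; take the real values from your provider's dashboard.

```python
import urllib.request
from urllib.parse import quote


def build_proxy_url(host: str, port: int, username: str, password: str) -> str:
    """Build an http://user:pass@host:port proxy URL, percent-encoding credentials."""
    user = quote(username, safe="")
    secret = quote(password, safe="")
    return f"http://{user}:{secret}@{host}:{port}"


def build_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends both HTTP and HTTPS traffic through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)


# Placeholder values (assumptions for illustration only).
proxy_url = build_proxy_url("gateway.example.com", 8000, "demo-user", "p@ss:word")
opener = build_opener(proxy_url)
print(proxy_url)  # http://demo-user:p%40ss%3Aword@gateway.example.com:8000

# A real request would then look like this (not run here, since it needs a live proxy):
# with opener.open("https://example.com/", timeout=30) as response:
#     html = response.read()
```

Percent-encoding the credentials matters when a password contains characters such as `@` or `:`, which would otherwise break the proxy URL.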

Pros

Access to millions of ethically sourced residential IPs across many countries, for low ban rates and high success in bypassing anti-bot systems.

Pay only for the bandwidth you use, making it cost-effective for both small-scale tasks and high-volume scraping pipelines.

Fully compatible with Python-based tools and browser automation libraries.

Especially effective for scraping JavaScript-heavy or geo-restricted websites that block traditional datacenter proxies.

Cons

Higher traffic volumes may require a paid plan.

IPcook's combination of dynamic residential IPs, scalable infrastructure, and flexible billing makes it a strong companion to the best website scraping software for businesses that need to extract large volumes of data efficiently and reliably.

Top 1. Scrapy - Premier Open-Source Framework for Developer-Led Web Scraping

Engineered for developers prioritizing granular control, Scrapy delivers a high-performance Python framework for sophisticated web scraping. Its asynchronous architecture enables precise definition of crawling logic, data parsing rules, and structured output formats. The modular design and vibrant community cement Scrapy as the preferred solution for constructing complex, large-scale data extraction pipelines.

Scrapy's extensibility covers tasks ranging from scraping e-commerce websites and indexing entire domains to loading results into databases. It integrates with proxy services like IPcook, supporting high-concurrency requests and reducing IP blocks via residential proxies. This positions Scrapy as a robust backend engine for both lightweight scripts and enterprise data operations.
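One common way to wire proxies into Scrapy is a small downloader middleware that sets `request.meta["proxy"]`, which Scrapy's built-in `HttpProxyMiddleware` then honors. The sketch below is written as plain Python so it runs without Scrapy installed. The class name, the `PROXY_URLS` setting, and the proxy addresses are hypothetical, and the `process_request(request, spider)` signature follows the Scrapy 2.x documentation, so check it against the version you use.

```python
import itertools
from types import SimpleNamespace


class RotatingProxyMiddleware:
    """Scrapy-style downloader middleware that assigns a proxy to each request."""

    def __init__(self, proxy_urls):
        if not proxy_urls:
            raise ValueError("at least one proxy URL is required")
        # Round-robin over the configured proxies.
        self._proxies = itertools.cycle(proxy_urls)

    @classmethod
    def from_crawler(cls, crawler):
        # PROXY_URLS is a hypothetical custom setting, not a built-in Scrapy one.
        return cls(crawler.settings.getlist("PROXY_URLS"))

    def process_request(self, request, spider=None):
        # Respect a proxy that a spider has already set explicitly.
        if "proxy" not in request.meta:
            request.meta["proxy"] = next(self._proxies)
        return None  # None tells Scrapy to keep processing the request


# Stand-in for scrapy.Request so the example runs without Scrapy.
middleware = RotatingProxyMiddleware(
    ["http://proxy-a.example.com:8000", "http://proxy-b.example.com:8000"]
)
for _ in range(3):
    fake_request = SimpleNamespace(meta={})
    middleware.process_request(fake_request)
    print(fake_request.meta["proxy"])
```

In a real project the class would be registered under `DOWNLOADER_MIDDLEWARES` with a priority below 750 so that it runs before `HttpProxyMiddleware`. If the provider exposes a single rotating gateway, the list can hold just that one URL.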

Pros

Zero-Cost & Open-Source: Community-driven development with no licensing fees

Unmatched Customization: Full pipeline control from middleware to output handlers

Asynchronous Speed: Native concurrency via Twisted for rapid data extraction

Proxy Scalability: Effortless integration with rotation tools like IPcook

Expansive Ecosystem: Extensions for caching, throttling, and exports (JSON/CSV/databases)

Cons

Python Expertise Required: Steep learning curve for non-developers

No Visual Interface: Lacks a GUI, necessitating code-based configuration

Complex Initial Setup: Significant time investment for spider/pipeline creation

External Anti-Bot Dependence: Relies on proxies/headers for CAPTCHA/bot mitigation

Top 2. ScrapeHero - Best Custom Web Scraping Service

ScrapeHero positions itself as a top-tier web scraping service provider that specializes in fully customized data extraction solutions. Unlike self-service tools or generic APIs, ScrapeHero builds tailored web scraping pipelines for each client, handling everything from website analysis to data delivery. It is ideal for businesses with unique or complex requirements, such as aggregating product data across niche marketplaces, collecting location-specific search results (e.g., Bing scraping, Google SERP scraping, or Google Maps data collection), or extracting structured content from semi-structured pages.

The company supports multiple delivery formats (JSON, CSV, API endpoints), scheduled scraping, and cloud-based infrastructure to ensure scalability and reliability. Its services include anti-bot evasion, CAPTCHA solving, and proxy management, reducing the need for internal maintenance. For businesses looking to outsource entire scraping operations to a dedicated team, ScrapeHero is one of the best web data scraping services available.
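Whatever the delivery format, the receiving side usually still deduplicates records before loading them. The following is a generic sketch of that step with JSON and CSV output, using only the Python standard library. It is not ScrapeHero's pipeline, and the sample records and field names are invented for illustration.

```python
import csv
import io
import json


def deduplicate(records, key_fields):
    """Keep the first record seen for each combination of key_fields."""
    seen = set()
    unique = []
    for record in records:
        key = tuple(record.get(field) for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique


def to_json(records) -> str:
    """Serialize records as pretty-printed JSON."""
    return json.dumps(records, indent=2, ensure_ascii=False)


def to_csv(records, fieldnames) -> str:
    """Serialize records as CSV text, ignoring fields not listed in fieldnames."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


# Invented sample data: the second row repeats the first SKU.
raw = [
    {"sku": "A1", "title": "Kettle", "price": 19.99},
    {"sku": "A1", "title": "Kettle", "price": 19.99},
    {"sku": "B2", "title": "Toaster", "price": 24.50},
]
clean = deduplicate(raw, key_fields=["sku"])
print(len(clean))  # 2
print(to_csv(clean, ["sku", "title", "price"]))
print(to_json(clean))
```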

Pros

Fully managed, tailor-made scraping pipelines

Supports complex websites and anti-bot protections

Structured data delivered via APIs or scheduled exports

Hands-off solution with enterprise-grade support

Cons

Less suitable for users seeking DIY or self-serve options

Project timelines may vary depending on customization needs

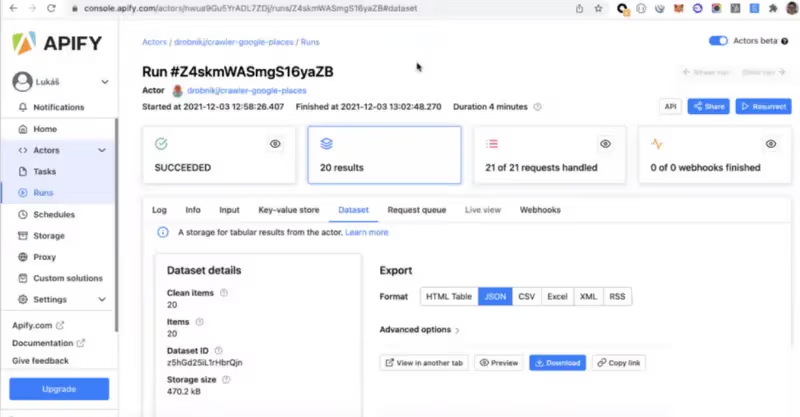

Top 3. Apify - Best for Complex Automation and Integration

Apify provides an advanced web scraping platform tailored for businesses needing automated scraping with complex workflows. Its actor-based architecture enables users to easily run custom workflows, combining data extraction, processing, and automation. With support for headless browsers like Puppeteer and Playwright, Apify efficiently scrapes dynamic pages and JavaScript-rendered content. This makes it ideal for large-scale projects involving e-commerce platforms, social media data, and API monitoring.

Beyond simple scraping, Apify excels in providing integration capabilities, including API endpoints and data processing features, making it perfect for companies that need to integrate extracted data into their existing business systems or databases. Its flexibility in managing tasks like scheduling, scaling, and logging, paired with strong automation support, solidifies Apify as a good web scraping service provider for automation-heavy tasks.
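As a rough illustration of the API-based integration described above, the sketch below builds (but does not send) two HTTP requests: one to start an actor run and one to read items from a dataset. The endpoint paths reflect my understanding of Apify's public REST API (v2) and should be verified against the official reference. The actor ID, token, dataset ID, and run input are placeholders, and the input shape depends entirely on the actor being run.

```python
import json
import urllib.parse
import urllib.request

API_BASE = "https://api.apify.com/v2"


def build_run_request(actor_id: str, token: str, run_input: dict) -> urllib.request.Request:
    """Build a POST request that starts an actor run with a JSON input."""
    url = f"{API_BASE}/acts/{urllib.parse.quote(actor_id, safe='~')}/runs"
    return urllib.request.Request(
        url,
        data=json.dumps(run_input).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def build_dataset_items_request(dataset_id: str, token: str, limit: int = 100) -> urllib.request.Request:
    """Build a GET request that reads up to `limit` items from a dataset as JSON."""
    query = urllib.parse.urlencode({"format": "json", "limit": limit})
    url = f"{API_BASE}/datasets/{urllib.parse.quote(dataset_id, safe='')}/items?{query}"
    return urllib.request.Request(
        url, headers={"Authorization": f"Bearer {token}"}, method="GET"
    )


# Placeholder identifiers (assumptions for illustration only).
run_request = build_run_request(
    "username~my-actor", "YOUR_API_TOKEN", {"startUrls": [{"url": "https://example.com/"}]}
)
items_request = build_dataset_items_request("DATASET_ID", "YOUR_API_TOKEN", limit=10)
print(run_request.get_method(), run_request.full_url)
print(items_request.get_method(), items_request.full_url)

# Sending is left out so the example runs offline:
# with urllib.request.urlopen(run_request, timeout=30) as response:
#     run = json.load(response)
```

Keeping request construction separate from sending makes the integration easy to unit-test and to plug into whatever HTTP client an existing system already uses.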

Pros

Custom workflows with actor-based architecture

Integration with Puppeteer/Playwright for dynamic content scraping

Robust API and automation features

Flexible scaling and scheduling

Cons

Automation setup involves a learning curve

Pricing can be high relative to the needs of smaller projects

Top 4. Browse AI - Best No-Code Web Scraping with Monitoring

Browse AI offers a no-code web scraping solution for users who want to extract data from websites without writing a single line of code. Its simple, user-friendly interface enables users to train AI models to recognize and scrape data, including paginated results, from websites, even those with complex layouts. In addition to basic scraping, Browse AI provides powerful monitoring capabilities, allowing users to track data changes in real time, making it ideal for applications such as price tracking, competitive analysis, and product monitoring.

What sets Browse AI apart is its ease of use, enabling non-technical users to set up and run scraping tasks with minimal effort. It supports automated data extraction and provides downloadable results in formats like CSV or JSON. Browse AI is perfect for small-to-medium businesses that require ongoing monitoring of specific data sources, without the need for extensive technical expertise.
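For readers curious about what change monitoring involves under the hood, here is a generic sketch that compares two scraping snapshots by fingerprinting each record. It illustrates the general idea only and is not Browse AI's implementation; the `sku` key and the sample prices are invented.

```python
import hashlib
import json


def fingerprint(record: dict) -> str:
    """Hash a record's canonical JSON form so any field change alters the hash."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def snapshot(records, key_field: str) -> dict:
    """Map each record's key to its fingerprint; this is all that must be stored between runs."""
    return {record[key_field]: fingerprint(record) for record in records}


def diff_snapshots(previous: dict, current: dict) -> dict:
    """Report which keys were added, removed, or changed between two snapshots."""
    added = sorted(current.keys() - previous.keys())
    removed = sorted(previous.keys() - current.keys())
    changed = sorted(
        key for key in current.keys() & previous.keys() if current[key] != previous[key]
    )
    return {"added": added, "removed": removed, "changed": changed}


# Invented sample data from two consecutive runs.
yesterday = snapshot(
    [{"sku": "A1", "price": 19.99}, {"sku": "B2", "price": 5.00}], key_field="sku"
)
today = snapshot(
    [{"sku": "A1", "price": 17.49}, {"sku": "C3", "price": 12.00}], key_field="sku"
)
print(diff_snapshots(yesterday, today))
# {'added': ['C3'], 'removed': ['B2'], 'changed': ['A1']}
```

Storing only fingerprints keeps the state between runs small; an alerting step would then act on any non-empty `changed` list.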

Pros

No-code platform for easy setup

Real-time data monitoring and alerts

Supports structured data extraction

Suitable for non-technical users and small businesses

Cons

Limited customization compared to more advanced tools

May struggle with websites that use advanced anti-scraping measures

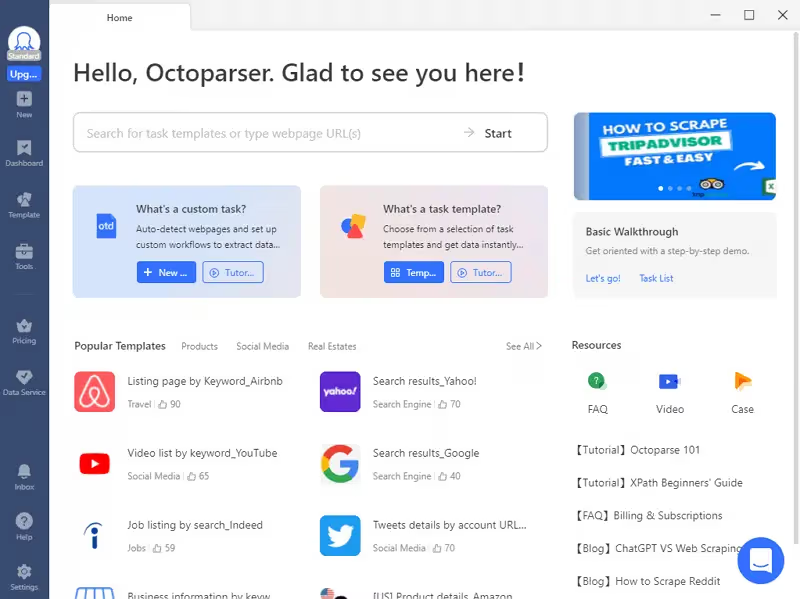

Top 5. Octoparse - Premier No-Code Web Scraping Solution for Beginners

For users seeking intuitive web scraping tools with zero coding, Octoparse stands out as a premier visual data extraction platform. Featuring drag-and-drop functionality and a streamlined interface, it enables point-and-click data harvesting from websites without programming knowledge. This solution is tailor-made for digital marketers, content teams, and e-commerce professionals – perfect for gathering product catalogs or tracking social media content.

The platform excels at scraping dynamic content (handling AJAX/JavaScript pages) through its integrated browser environment. Ready-made templates accelerate data extraction from popular sites, while seamless proxy integration enhances success rates for large-volume operations by preventing IP restrictions.

Pros

Intuitive visual workflow designer (no coding)

Dual cloud/local execution options

Built-in browser for JavaScript content and logins

Pre-built templates for e-commerce/social platforms

Proxy integration support (IPcook compatible)

Cons

Limited advanced logic implementation

Cloud processing delays during peak loads

Instability with large-scale operations

Tiered support response times

Side-by-Side Comparison: Top Web Scraping Services at a Glance

To help you make an informed decision, let's compare the key features of the top web scraping services. The table below highlights each provider's strengths and limitations so you can pick the one that best matches your data extraction needs.

| Feature | Scrapy | ScrapeHero | Apify | Browse AI | Octoparse |
|---|---|---|---|---|---|
| Clean, Hard-to-Detect IPs | ❌ No (Requires external proxy setup) | ✅ Yes (Dedicated proxies) | ✅ Yes (Headless Browsers) | ❌ Limited (Basic built-in proxy) | ❌ No (Needs external proxies for stealth) |
| Suitable for Large-Scale Data Scraping | ✅ Yes (Highly scalable with coding & infra) | ✅ Yes (Custom solutions) | ✅ Yes (Scalable Automation) | ❌ Limited to smaller-scale projects | ✅ Yes (With correct proxy & scheduling setup) |
| Managed Scraping Available | ❌ No (Open-source, self-managed) | ✅ Yes (Custom services) | ✅ Yes (Automated workflows) | ✅ Yes (Monitoring & Alerts) | ✅ Yes (Enterprise plan offers managed service) |
| Website Scale/Type | ✅ Ideal for any site type (needs plugins for dynamic) | ✅ Ideal for complex sites & APIs | ✅ Ideal for dynamic, multi-step scraping | ✅ Best for small to medium businesses | ✅ Ideal for e-commerce, social, and directory scraping |
| Legal Compliance Support | ❌ No built-in legal compliance | ✅ Yes (Compliant with laws) | ✅ Yes (Compliant with legal needs) | ❌ Limited legal guarantees | ❌ Basic TOS, no formal legal guarantee |
| Automation & Customization | ✅ High (Custom spiders, middleware) | ✅ Moderate (Custom scraping solutions) | ✅ High (Puppeteer/Playwright support) | ✅ Moderate (Pre-built templates) | ✅ Moderate (Drag-and-drop workflows + scripting) |

Final Thoughts

The right web scraping service provider depends on your specific requirements. Whether you're looking for high-volume data extraction, scraping of dynamic content, or a fully managed service, there's a solution that fits. Whichever you choose, the most successful web scraping partnerships are built on three key pillars: reliability, technical expertise, and legal compliance.

IPcook stands out as an excellent proxy provider choice for businesses that require flexibility, robust anti-blocking capabilities, and cost-effective pricing. Its combination of dynamic residential IPs and flexible traffic-based billing ensures that it can support both small and large-scale data collection tasks efficiently, while maintaining the highest standards of data integrity and security.